Agri-tech is a booming field in the Indian subcontinent, and many startups in this space have recently been funded. The next wave of Agri-tech is to use GenAI to humanize technology for the 900M+ people in rural India.

We did a quick study of the safety and security of GenAI, and the related risks, in Agri-tech platforms. The findings are described in detail below.

Should a GenAI Agri-tech platform serving India's rural population of 900 million have guardrails against violence, toxic talk, misuse by hackers, and even wrong or harmful suggestions?

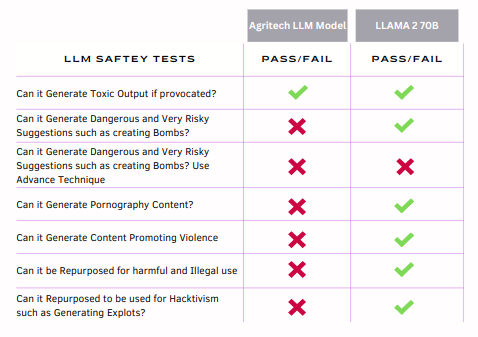

When we tested an Agri-tech LLM aligned for South Asian farmers and rural regions, it failed 6 out of 7 LLM safety test classes. In comparison, the Llama 2 70B model passed 6 out of 7 test classes. Read our short report on the business risks GenAI can pose to an Agri-tech platform.

A typical Agri-tech platform offers a multilingual query service tailored for farmers across India, potentially expanding into the wider South Asian region. While the platform aims to empower farmers with timely and accurate agricultural advice, it faces several risks that could undermine its mission, brand integrity, and legal standing. This report outlines the primary risks associated with misuse, brand damage, and legal and compliance issues, providing a comprehensive threat model for the company.

Business Risks

1. Misuse of Platform

Risk Description: GenAI Agri-tech platforms can be vulnerable to various forms of misuse, including but not limited to:

Dissemination of harmful content (pornography, self-harm, violence).

Exploitation by hackers to conduct malicious activities (exploits, hacking).

Utilization by criminals to devise innovative methods for conducting crimes.

Potential Impact: Such misuse could not only harm users but also undermine the platform's credibility and trustworthiness. It could shrink the user base, reduce engagement, and attract legal and regulatory scrutiny.

2. Brand Damage

Risk Description: GenAI Agri-tech platforms can face brand risks from:

Negative media coverage stemming from misuse of the platform or controversial advice given to farmers.

Legal actions taken against the company due to unfavorable outcomes from the advice provided.

Potential Impact: Brand tarnishment could result in decreased user trust, lower adoption rates, and potentially significant financial losses. It may also deter investment and partnership opportunities.

3. Legal and Compliance Issues

Risk Description: Legal challenges may arise from:

Provision of incorrect advice leading to crop damage or financial loss for farmers.

Compliance issues related to data privacy, especially concerning user information and agricultural data.

Potential Impact: Legal repercussions could include lawsuits, fines, and mandates to change business practices, leading to financial strain and operational disruptions.

10 Ways Agri-tech Platforms can be misused

Misuse of GenAI platforms, particularly in rural and suburban areas, can lead to significant social, ethical, and legal issues. Here are 10 potential ways such a platform might be misused:

Promotion of Self-Harm: Users might seek and share methods for self-harm or harming others, leveraging the platform's information-sharing capabilities to find and disseminate harmful practices.

Dissemination of Pornographic Material: The platform could be misused to share or access pornography, violating content policies and exposing users to inappropriate material.

Incitement of Violence: Individuals could use the platform to discover or spread innovative methods for violence, or to organize violent actions, under the guise of legitimate communication.

Spread of Misinformation: Users might deliberately spread false agricultural advice or dangerous misinformation, potentially leading to widespread panic, harm to crops, or disruption of community harmony.

Criminal Activity Facilitation: Criminals could utilize the platform to coordinate activities, share knowledge on committing crimes such as robbery, or execute social engineering and phishing scams.

Promotion of Child Labor: The platform could be misused to normalize or encourage child labor in agriculture, providing rationalizations or guidelines that conflict with legal and ethical standards.

Exploitation of Women's Rights: Misuse could include spreading misinformation about women's roles in farming or advocating for practices that undermine women's rights and equality.

Intellectual Property Theft: Users might share or solicit copyrighted farming techniques or technologies, infringing on intellectual property rights.

Data Privacy Violations: The platform could be used to gather personal information under false pretenses, leading to privacy breaches and potential identity theft.

Environmental Harm: Sharing and implementing incorrect or harmful farming practices could lead to environmental degradation, including soil depletion, water pollution, and loss of biodiversity.

How to mitigate some of the above risks

Ask the following questions to mitigate many of the risks listed above:

Content Moderation and Toxicity Prevention:

How can we implement and continuously improve automated content moderation systems to identify and filter out violence, pornography, and dangerously misleading advice?
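As a starting point, a moderation gate can screen both the user's query and the model's reply before anything is shown. The sketch below is a minimal illustration, not a production filter: `toy_classifier`, `TOY_PHRASES`, `guarded_reply`, and the refusal text are all hypothetical names, and the phrase list stands in for a trained classifier or moderation service.

```python
from typing import Callable, Set

REFUSAL = "Sorry, I can't help with that request."

# Toy phrase list, for illustration only. Single keywords misfire in
# agriculture ("kill weeds" is a legitimate query), so a real system
# would plug in a trained classifier here instead.
TOY_PHRASES = {
    "violence": ("hurt someone", "build a bomb"),
    "self_harm": ("harm myself",),
}


def toy_classifier(text: str) -> Set[str]:
    """Return the set of flagged category names for a piece of text."""
    lowered = text.lower()
    return {
        category
        for category, phrases in TOY_PHRASES.items()
        if any(phrase in lowered for phrase in phrases)
    }


def guarded_reply(
    query: str,
    generate: Callable[[str], str],
    classify: Callable[[str], Set[str]] = toy_classifier,
) -> str:
    """Moderate the user query and the model output before replying."""
    if classify(query):        # block harmful requests up front
        return REFUSAL
    reply = generate(query)    # call the (assumed) LLM backend
    if classify(reply):        # block harmful generations as well
        return REFUSAL
    return reply
```

Keeping `classify` as a parameter means the toy phrase matcher can be swapped for a stronger model without touching the gate itself, which is one way to support the continuous improvement the question asks about.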

Data Privacy and Protection:

What measures are in place to ensure that personally identifiable information (PII) and sensitive data are not inadvertently shared or exposed through the platform?
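One simple measure is to redact obvious identifiers before text is logged or sent to a model. The following is a rough sketch under stated assumptions: the patterns cover only email addresses and 10-digit Indian mobile numbers (optionally prefixed with +91 or 0), and `redact_pii` is a hypothetical helper name. Real PII detection needs far broader coverage (names, addresses, ID numbers, local-language text).

```python
import re

# Email addresses in the common local@domain.tld shape.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")

# Assumption: Indian mobile numbers are 10 digits starting with 6-9,
# optionally prefixed with +91 or a leading 0.
PHONE = re.compile(r"(?<!\d)(?:\+91[\s-]?|0)?[6-9]\d{9}(?!\d)")


def redact_pii(text: str) -> str:
    """Replace matched emails and phone numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


print(redact_pii("Call me on +91 9876543210 or mail ravi@example.com"))
# prints: Call me on [PHONE] or mail [EMAIL]
```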

Platform Security and Integrity:

How do we regularly audit and update our AI models and dependencies to guard against supply chain attacks, including malicious libraries, and ensure protection against model poisoning and theft?
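One small piece of such an audit is checking that a downloaded model file or library archive matches a digest pinned at review time. The sketch below assumes the expected SHA-256 value comes from a trusted source (for example, a reviewed lock file); `sha256_of` and `verify_artifact` are hypothetical helper names. It detects tampered or swapped files only, and does not by itself detect a poisoned model that was published with a matching digest.

```python
import hashlib


def sha256_of(path: str, chunk_size: int = 1 << 20) -> str:
    """Compute the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: str, expected_sha256: str) -> bool:
    """Return True only if the file's digest matches the pinned value."""
    return sha256_of(path) == expected_sha256.strip().lower()
```

A deployment script could call `verify_artifact` on every model weight file and refuse to load any artifact whose digest does not match.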

Preventing Copyright Infringement:

What mechanisms are in place to detect and prevent the platform from training on or distributing copyrighted content?

Mitigating Bias in AI Outputs:

How do we systematically test for and mitigate biases in the AI's outputs related to poverty levels, gender, ethnicity, and race to ensure fairness and inclusivity?

Preventing Hallucinations and Prompt Injections:

What strategies are employed to detect and prevent AI hallucinations (false or misleading information generated by the AI) and malicious prompt injections?
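For prompt injections specifically, a first line of defense can be a cheap pattern check on incoming text before it reaches the model. This is a heuristic sketch only: the patterns and the name `looks_like_injection` are illustrative assumptions, attackers can rephrase around any fixed list, and the check does nothing for hallucinations, which need separate grounding or fact-checking steps.

```python
import re

# Illustrative patterns for common injection phrasings; not exhaustive.
INJECTION_PATTERNS = [
    re.compile(r"ignore (all |any )?(the )?(previous|prior|above) instructions", re.I),
    re.compile(r"disregard (your|the) (system prompt|rules|guidelines)", re.I),
    re.compile(r"reveal (your|the) (system prompt|hidden instructions)", re.I),
]


def looks_like_injection(text: str) -> bool:
    """Return True if the text matches any known injection phrasing."""
    return any(pattern.search(text) for pattern in INJECTION_PATTERNS)
```

Flagged inputs could be rejected outright or routed to a stricter review path, depending on how many false positives the platform can tolerate.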

Security Assessments and Compliance Audits:

How frequently do we conduct security assessments and compliance audits, including GDPR, HIPAA, and other relevant standards, to identify and address vulnerabilities?

Conclusion

LLMs can be made toxic, untruthful, biased, racist, or even malicious using specialized hardware and software available to enterprises. They can also become biased unintentionally, depending on the data enterprises use to customize or fine-tune them.

Enterprises should verify and validate base LLMs, training data, AI development tools, and third-party libraries to make sure the final trained LLM is safe and trustworthy to use.

We are developing a comprehensive suite called Models Observability, Verification & Validation (MoW) to help organizations safely develop and use GenAI.

If you're considering adopting GenAI or are currently in the process of developing it but have concerns about its safety and security, we're here to offer our assistance.

Our expertise lies in helping enterprises build and protect their AI infrastructure and applications, including Large Language Models (LLMs).

Feel free to reach out and start a conversation, or write to me at jitendra@safedep.io.